Note that to create pipeline function which is not included in this slide, will contain your TFX code. You simply need to include the proper imports and call n. TFX includes an Airflow DAG runner for running your TFX pipelines via Airflow. If you have already written your ML pipeline using TFX, then you don't need to do much. It is possible that the task will be run twice for this reason, and you will want to ensure that this will not cause any problems in your workflow. The pod will have to run to completion, despite any Airflow worker shutdown or restart. The Complete Hands-On Introduction to Apache Airflow Our Best Pick, 8459+ 2. In either case, you want to be sure that your containerized tasks are item pod. 5 Best Apache Airflow Courses, Training, Classes & Tutorials Online 1. Using this operator, you can specify another GKE cluster on Google Cloud, and run your containerized task inside a pod there. And this could interrupt our other workloads. Note that this is not generally recommended because it can lead to resource starvation in the cluster. KubernetesPodOperators allow us to run the container inside a pod on our Composer GKE cluster. If we have task with non Py-PI dependencies, or if the task are already containerized, we can run the containers as Airflow tasks. > By enrolling in this course you agree to the Qwiklabs Terms of Service as set out in the FAQ and located at: <<< View Syllabusįinally, let us say a few words about how we can use Airflow, and Cloud Composer to orchestrate container based workloads and TFX pipelines. You have completed the MLOps Fundamentals course. You have completed the courses in the ML with Tensorflow on GCP specialization (or at least a few courses) You have a good ML background and have been creating/deploying ML pipelines

Please take note that this is an advanced level course and to get the most out of this course, ideally you have the following prerequisites: And finally, we will go over how to use MLflow for managing the complete machine learning life cycle. These 2 inch deep filters (compared to typical 1 inch) provide greater surface area for filtration, better air flow, quieter operation, and longer filter.

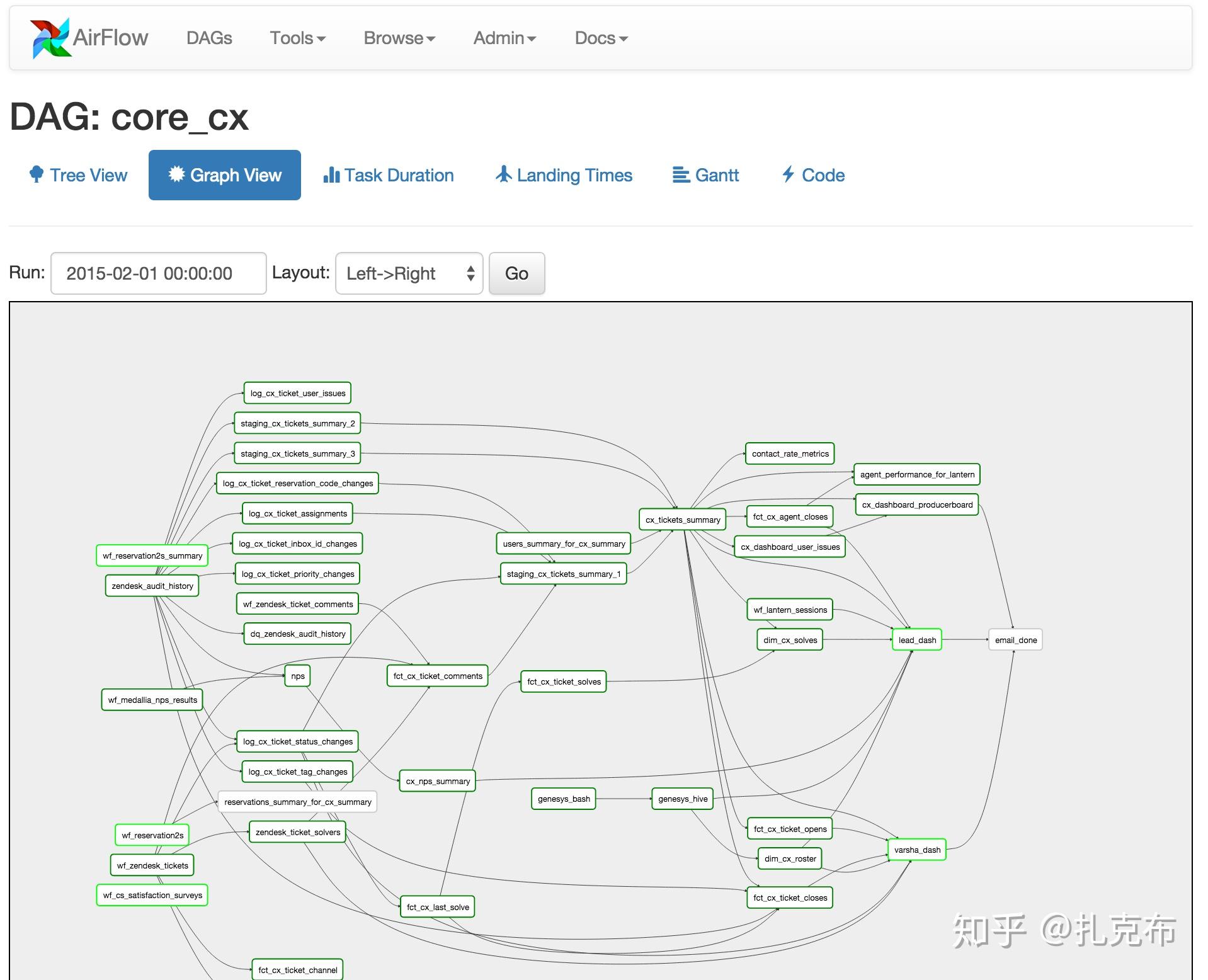

You will also learn how to use another tool on Google Cloud, Cloud Composer, to orchestrate your continuous training pipelines. In this setup, we run SequentialExecutor, which is ideal for testing DAGs on a local. Then we will change focus to discuss how we can automate and reuse ML pipelines across multiple ML frameworks such as tensorflow, pytorch, scikit learn, and xgboost. Apache Airflow Tutorial Basic setup using a virtualenv and pip. You will also learn how you can automate your pipeline through continuous integration and continuous deployment, and how to manage ML metadata. You will learn about pipeline components and pipeline orchestration with TFX. The first few modules will cover about TensorFlow Extended (or TFX), which is Google’s production machine learning platform based on TensorFlow for management of ML pipelines and metadata. In this course, you will be learning from ML Engineers and Trainers who work with the state-of-the-art development of ML pipelines here at Google Cloud.
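The idempotency requirement for containerized tasks is easy to state and easy to get wrong. The sketch below is a hypothetical task body, not code from the course or from Airflow's API. It assumes the task writes one output file per logical (scheduled) date into a directory it can reach; the name `run_task`, the file naming scheme, and the placeholder result are all illustrative assumptions. In a real DAG you would typically pass the date in from Airflow's `{{ ds }}` macro, for example through the container's arguments.

```python
import json
import os
import tempfile
from pathlib import Path


def run_task(logical_date: str, output_dir: Path) -> Path:
    """Hypothetical containerized task body, written to be idempotent.

    The output path depends only on the logical date, never on wall-clock
    time or a random ID, so a retried attempt targets the same file.
    """
    output_dir.mkdir(parents=True, exist_ok=True)
    target = output_dir / f"features_{logical_date}.json"

    # If an earlier attempt already finished, do nothing: running twice is safe.
    if target.exists():
        return target

    # Placeholder for the real work (assumed, not from the source).
    result = {"logical_date": logical_date, "rows_processed": 100}

    # Write to a temp file in the same directory, then rename it over the target.
    # os.replace is atomic on POSIX filesystems, so a worker restart mid-write
    # never leaves a half-written target behind.
    fd, tmp_path = tempfile.mkstemp(dir=output_dir, suffix=".tmp")
    try:
        with os.fdopen(fd, "w") as f:
            json.dump(result, f)
        os.replace(tmp_path, target)
    except BaseException:
        # Remove the partial temp file if anything failed before the rename.
        if os.path.exists(tmp_path):
            os.remove(tmp_path)
        raise
    return target


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        out = Path(d)
        first = run_task("2024-01-01", out)
        second = run_task("2024-01-01", out)  # simulated retry after a restart
        print(first == second, len(list(out.iterdir())))  # True 1
```

Because the result is a deterministic function of the logical date, even two overlapping attempts converge on the same file, which is exactly the property you want when a worker restart causes the pod's task to be scheduled again.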
